PM Life as Agents Take on More

I had a sidewalk conversation with two neighbors about AI and jobs. The next day I watched Nate B Jones break down what happens when companies lay off managers, and the conversation turned out to be a stress test of his entire framework. Three people, three roles, three different relationships with AI.

TL;DR

I had a sidewalk conversation with two neighbors that turned into a real-time debate about AI replacing jobs. Then I watched a Nate B Jones video that gave me the exact framework to explain why all 3 were right, and wrong. One neighbor is a project manager already using AI daily. One is a business analyst who coaches companies. The third, the husband of one of my neighbors, is a skeptic convinced AI cannot do creative work. Each of them is right from where they stand, and all of us are also wrong, depending on which management function you are talking about.

“The question is not whether AI replaces your job. The question is which part of your job is routing, which part is sensemaking, and which part is accountability.”

The Sidewalk, Then the Video

I was walking home yesterday when I ran into two of my neighbors. One asked me a completely unrelated question: “Do you prefer talking on the phone or not?”

I walked into that conversation cold. No context. No lead-up. Just a question out of nowhere — exactly the way our AI agents wake up every session. And just like an agent handling a simple routing request, I could respond immediately because the question was straightforward. The information was all there. No deep context needed.

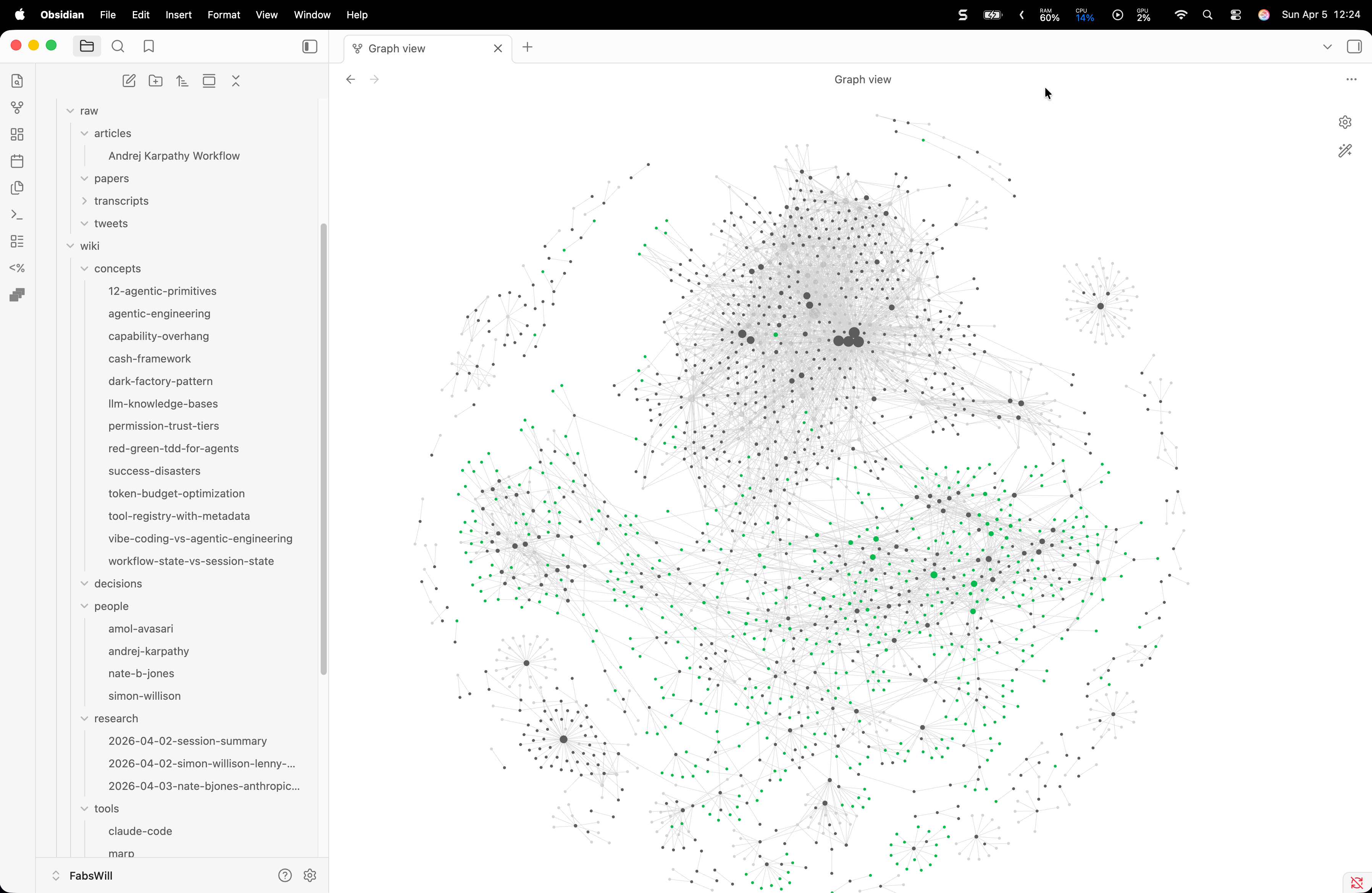

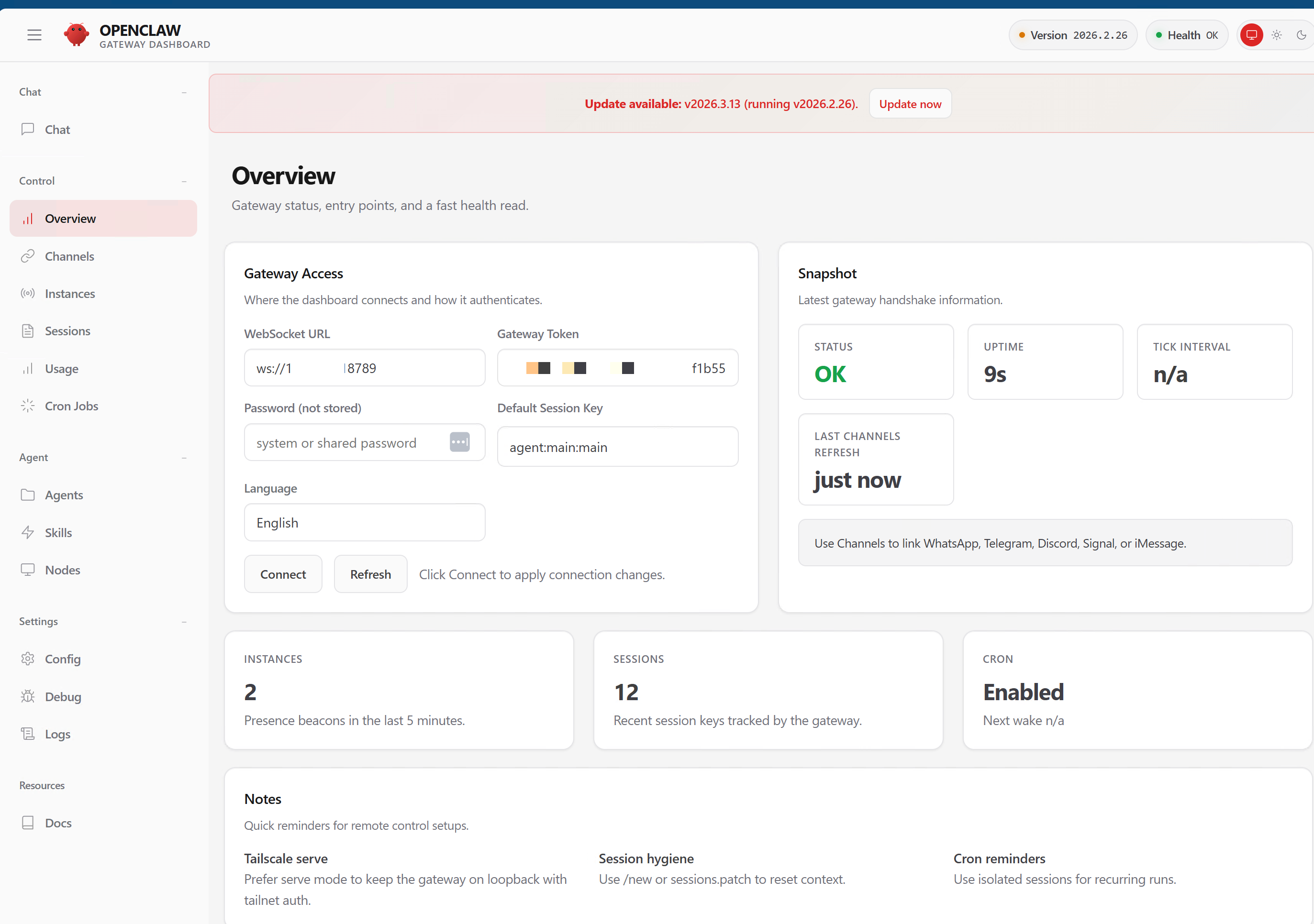

But then the conversation evolved. Within ten minutes it had become more nuanced and more complex: why I am on the phone in the first place, how much of my daily work could be automated, and what I am actually doing with the autonomous agents I build, Ada for family operations and Mimi for MACONA, a 501(c)(3) nonprofit. I shared what the agents handle. I showed them the blog. I talked through what I built and what it replaced.

That is when things got really interesting. As we went deeper, our different professional experiences started shaping our conclusions. I build AI for a living and use it both at home and at the office. My neighbors bring completely different lenses — project management, business analysis, healthy skepticism. The same conversation, the same facts on the table, but three different readings of what it all means. That is sensemaking. And it requires context that a cold start does not give you.

Then the husband of one of my neighbors came out. And the conversation shifted again.

The very next day, which is today, I watched Nate B Jones break down what happens when companies lay off their managers: 3 case studies, 3 different approaches, all hitting the same structural wall. The thesis is clean. Management is not one job. It is three jobs bundled together. And AI is only ready for one of them.

And I realized: the 3 people I was standing with on that sidewalk each embodied a different piece of this framework.

Three People, Three Lenses

Here is what made this interesting. I was standing on a sidewalk with 3 people who each embody a different relationship with AI in their work.

The project manager already uses AI heavily for her tasks. She gets it. She is living the first wave — letting AI handle the information routing, the status updates, the task management noise. She did not need convincing.

The business analyst coaches companies on building their operational models. He sees the potential but thinks about it through the lens of process design and client work. For him, the question is less “will AI take my job” and more “how do I integrate this into the frameworks I already use.”

The skeptic has firm opinions. AI can automate, sure. But creative work? Strategy? Judgment calls? He does not think AI is getting there. And honestly — he is not entirely wrong.

The Framework That Explains All Three

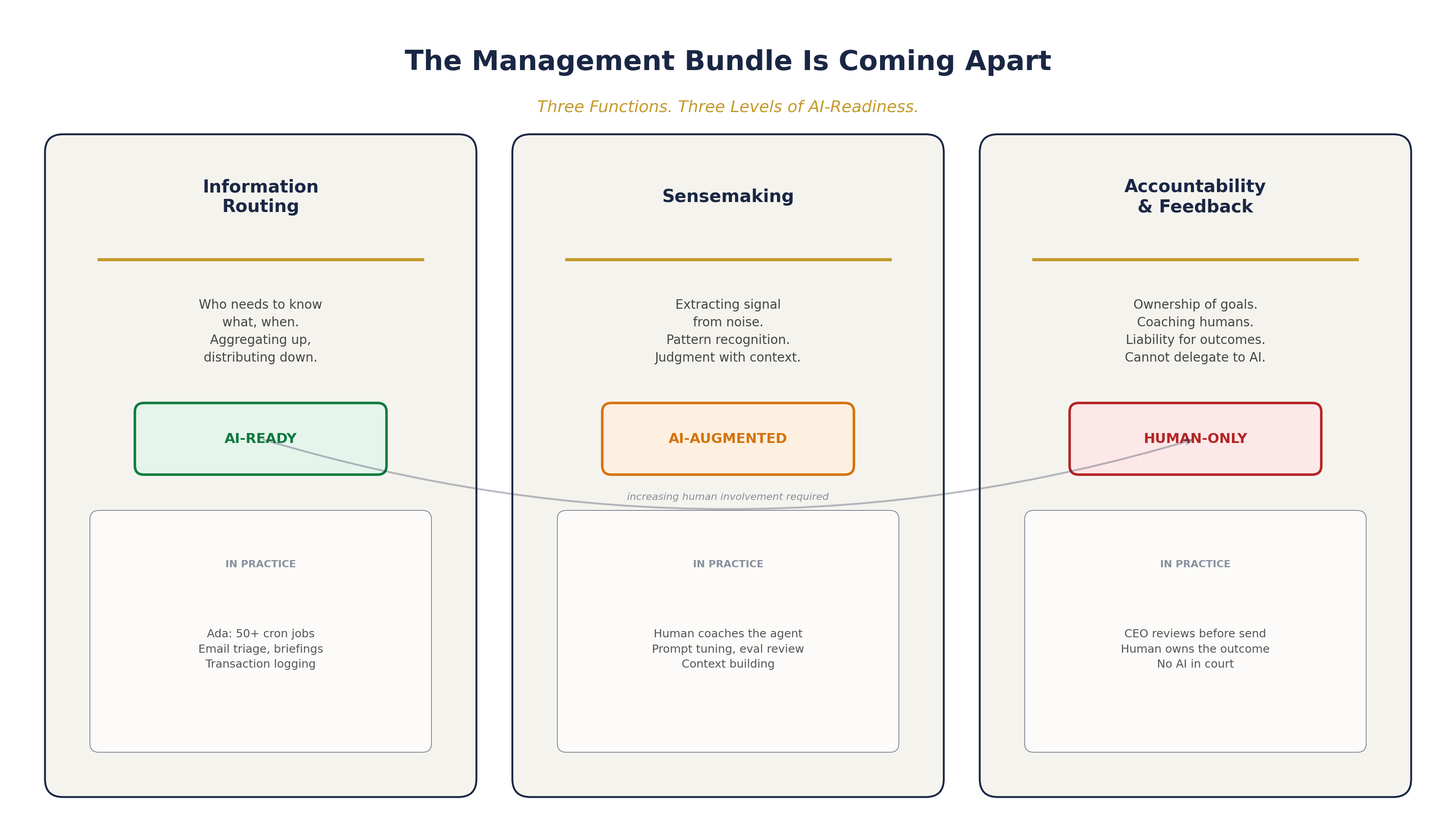

What Nate lays out — and what I have been seeing firsthand — is that management has always been three distinct functions masquerading as one role:

| Function | What It Is | AI-Readiness |
|---|---|---|
| Information Routing | Who needs to know what, when. Aggregating up, distributing down. | Done. Table stakes. This is gone. |
| Sensemaking | Extracting signal from noise. Interpreting patterns. Making judgment calls with domain context. | Middle of the road. Improving fast but not there yet. |
| Accountability and Feedback | Ownership of goals over time. Coaching. Human liability for outcomes. | Not happening. You cannot take an AI to court. |

My neighbor the project manager? She is already offloading information routing to AI. That is the easiest win. The business analyst is operating in sensemaking territory — and he is right that AI augments but does not replace that work yet. The skeptic is anchored on accountability and creative judgment — and he is right that those remain fundamentally human.

The problem is that most people — and most companies — talk about “AI replacing jobs” as if a job is one thing. It is not. It is a bundle. And the bundle is coming apart.

What I Am Seeing with My Own Agents

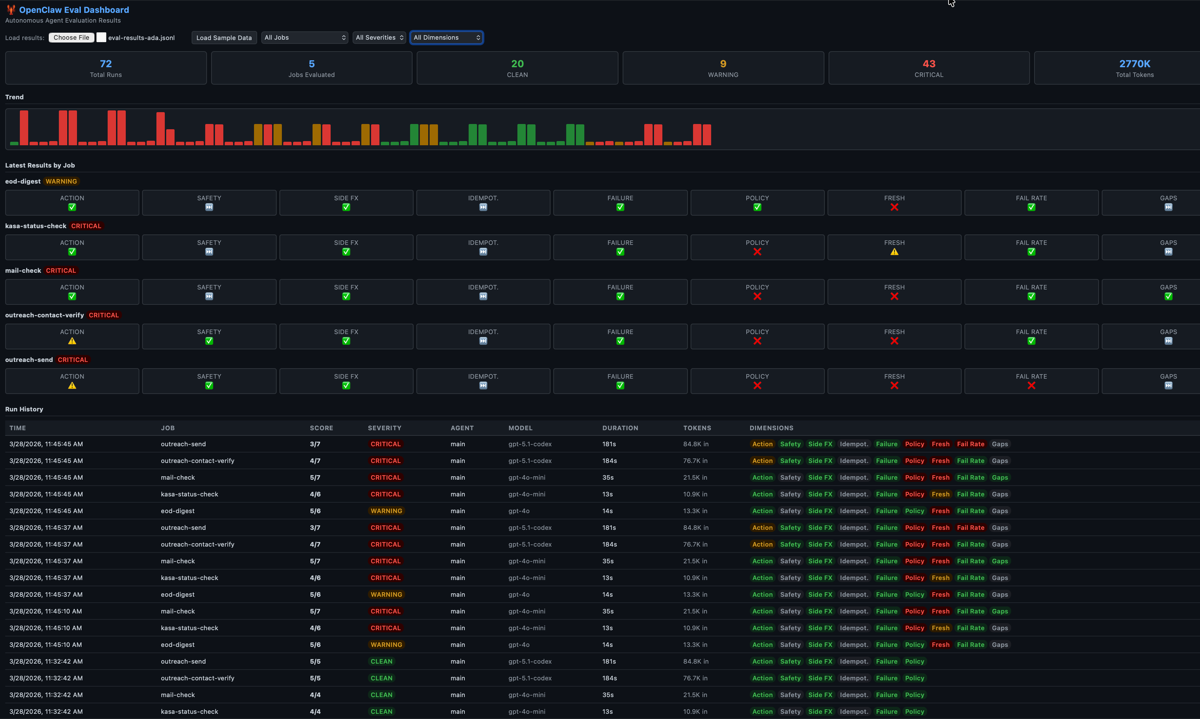

I run this experiment every day across two production systems.

The nonprofit case is where the business value is clearest. MACONA is a 501(c)(3) that serves women and children in West Africa and in the greater Baltimore area. Before agents, the CEO, Mimi, spent her mornings triaging email, drafting newsletter content, researching corporate matching programs, and manually posting to social media. That is pure information routing work consuming hours of executive time.

Now, Mimi’s AI assistant handles morning inbox briefings via Microsoft Graph, publishes blog posts through an automated content pipeline — I wrote about the full system here — drafts branded email campaigns with Brevo using test-send approval gates, cross-promotes content to LinkedIn, Twitter, and Facebook via Buffer, and researches Benevity corporate matching programs for the SDR pipeline. All deterministic. All with 15 hardened Python scripts handling the API mechanics while the AI handles the creative work.

That is routing done right. The CEO went from spending her mornings triaging email to reviewing the agent’s briefing and focusing on what actually matters — donor relationships, program strategy, community impact. The sensemaking and accountability stayed with the human. The routing went to the machine.
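The test-send approval gate mentioned above can be pictured with a small sketch. This is not the MACONA pipeline itself and it does not call the Brevo API. The `Campaign` class, the function names, and the `send_fn` callback are all hypothetical, chosen only to show the shape of the idea: the agent can draft and send a test, but a live send is refused until a human has approved.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Campaign:
    """Hypothetical campaign record; field names are illustrative only."""
    subject: str
    body: str
    test_sent: bool = False
    approved: bool = False
    log: List[str] = field(default_factory=list)


# send_fn stands in for whatever actually delivers mail (an assumption);
# it receives a recipient address and the campaign.
SendFn = Callable[[str, Campaign], None]


def send_test(campaign: Campaign, send_fn: SendFn, reviewer: str) -> None:
    """Deliver the draft to a single human reviewer and record that it happened."""
    send_fn(reviewer, campaign)
    campaign.test_sent = True
    campaign.log.append(f"test->{reviewer}")


def approve(campaign: Campaign) -> None:
    """Record human approval; refuse if nobody has seen a test send yet."""
    if not campaign.test_sent:
        raise RuntimeError("cannot approve before a test send")
    campaign.approved = True


def send_live(campaign: Campaign, send_fn: SendFn, recipients: List[str]) -> int:
    """Send to the real list only after approval; return the number sent."""
    if not campaign.approved:
        raise PermissionError("campaign not approved by a human reviewer")
    for recipient in recipients:
        send_fn(recipient, campaign)
        campaign.log.append(f"live->{recipient}")
    return len(recipients)
```

The design choice worth noticing is that the gate lives in plain code, not in the prompt. The agent handles the creative draft, while the deterministic script decides whether anything leaves the building.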

On the family side, Ada handles 50+ cron jobs daily: morning briefings, email triage, financial transaction logging, device monitoring, outreach campaigns. But as I wrote about earlier today, the issue is not simply that the AI forgets. It is that without explicit instructions and deterministic workflows, the agent will improvise, sometimes brilliantly, sometimes disastrously. Every new context window is a blank slate. Persistent memory is not built in. You have to build it yourself with SOUL files, state restoration, and pipeline health checks.
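To make "state restoration" and "health checks" a little more concrete, here is a minimal sketch using only the Python standard library. It is not Ada's actual implementation. The JSON layout, the function names, and the `max_age_seconds` threshold are assumptions for illustration. The idea is that each session starts by reading what the last session saved, and a simple status string tells the pipeline whether that memory can be trusted.

```python
import json
import os
import tempfile
import time
from pathlib import Path


def save_state(path, state):
    """Write state atomically so a crash mid-write cannot corrupt the file."""
    path = Path(path)
    payload = {"saved_at": time.time(), "state": state}
    # Write to a temp file in the same directory, then swap it into place.
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(payload, handle)
    os.replace(tmp_name, path)


def restore_state(path, max_age_seconds):
    """Return (state, status), where status is ok, stale, missing, or corrupt."""
    path = Path(path)
    if not path.exists():
        return {}, "missing"
    try:
        payload = json.loads(path.read_text(encoding="utf-8"))
        saved_at = float(payload["saved_at"])
        state = payload["state"]
    except (ValueError, KeyError, TypeError):
        # Unreadable JSON or an unexpected layout: start clean and flag it.
        return {}, "corrupt"
    if time.time() - saved_at > max_age_seconds:
        return state, "stale"
    return state, "ok"
```

A cron job could call `restore_state` first and refuse to act, or alert a human, on anything other than `"ok"`. That keeps the decision about whether to trust old context in deterministic code instead of leaving it to the agent to improvise.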

The coaching loop — adjusting prompts, tightening evals, adding context, correcting outputs — that is sensemaking work. And it is still me doing it. The agent does not improve on its own. It improves because I invest in it.

What Went Wrong in the Conversation

I will be honest — I oversold it a little. When you are excited about what you have built, the temptation is to make it sound like agents can handle everything. My skeptical neighbor pushed back, and he was right to push back.

The gap I glossed over is the sensemaking layer. The agents can route information beautifully. They can summarize, categorize, prioritize, and distribute. But when I get a piece of information that requires me to connect it to something I know from ten years of product management experience — a pattern I recognize, a political dynamic I can read, a technical risk I can feel — the agents do not have that. Not yet.

The companies in Nate’s analysis that are getting this wrong are the ones who assume that because AI handles routing, they can just eliminate the people who were doing routing plus sensemaking plus accountability. They compress the bundle instead of decomposing it. The result is burnout, attrition, and the exact cultural strain that makes organizations brittle.

At MACONA, we learned this the hard way. The agent would draft campaign emails that were technically correct but emotionally flat. It would research companies and present data that was accurate but lacked the nuance of knowing which contacts are warm vs cold. The sensemaking gap is real — and closing it requires continuous human investment, not just better models.

Lessons

Decompose before you compress. Do not assume a job is one function. Map which parts are routing, which are sensemaking, and which are accountability. Automate routing. Invest in the other two.

AI coaching is sensemaking in disguise. Every time you correct an agent, adjust a prompt, or refine an eval — you are doing sensemaking work. That work has value. Name it.

Accountability requires legal standing. Until there is a framework for who is liable when an AI’s output causes harm, a human has to own the outcome. This is not a technical limitation. It is a legal and social one.

The skeptics are not wrong — they are just scoped to one function. When someone says “AI cannot replace judgment,” they are talking about sensemaking and accountability. They are correct. When someone says “AI is already doing my job,” they are talking about routing. Also correct.

Your agents get better because you do the sensemaking. The agents do not improve on their own. They improve because you invest in coaching, evaluation, and context-building. The human in the loop is not a safety net — it is the engine.

Why This Matters Beyond My Sidewalk

Nearly half of US companies removed a management layer in the past year. Most of them did it because “AI can handle management now.” But AI can handle routing. It cannot handle sensemaking or accountability — not at the level that keeps an organization healthy.

If you are a PM, a project manager, an analyst, or anyone whose job involves routing information between people — the honest answer is that part of your work is being automated right now. Today. The question is whether you are also doing sensemaking and accountability work, and whether your organization recognizes the difference.

The companies that will thrive are the ones that decompose the management bundle deliberately — like Block’s DRI model, where specific people own specific cross-cutting problems for fixed periods, with full authority and a time boundary. The ones that will struggle are the ones that just cut heads and hope the AI fills the gap.

Here is the real story: the management bundle has been held together by tradition, not by logic. AI is the force that finally pulls it apart. What you do with the pieces determines whether your organization gets faster or just gets more fragile.

Cheers, Fabian Williams

I build autonomous AI agents and the operational infrastructure to keep them honest — for nonprofits, small businesses, and enterprise teams. If you are thinking about how AI changes the shape of your team — or if you just want to argue about it on a sidewalk — I would like to hear from you.

- Blog: fabswill.com

- LinkedIn: fabiangwilliams

- Twitter/X: @fabianwilliams

- MACONA: macona.org